This research explores how students interact with computer-based testing interfaces and identifies behavioral patterns that distinguish efficient from inefficient test-taking approaches. As assessments increasingly shift from paper-based to digital formats, understanding these interaction patterns is crucial for improving test design and ensuring that digital assessments measure subject knowledge rather than digital literacy.

Research Questions

- What distinct behavioral patterns emerge in students' interaction with computer-based tests?

- Which behavioral patterns distinguish efficient from inefficient test-taking approaches?

Methodology

We analyzed process data from the 2017 National Assessment of Educational Progress (NAEP) Grade 8 Mathematics assessment, which provided detailed logs of student interactions with the testing platform. Using this rich dataset of 1,642 students, we:

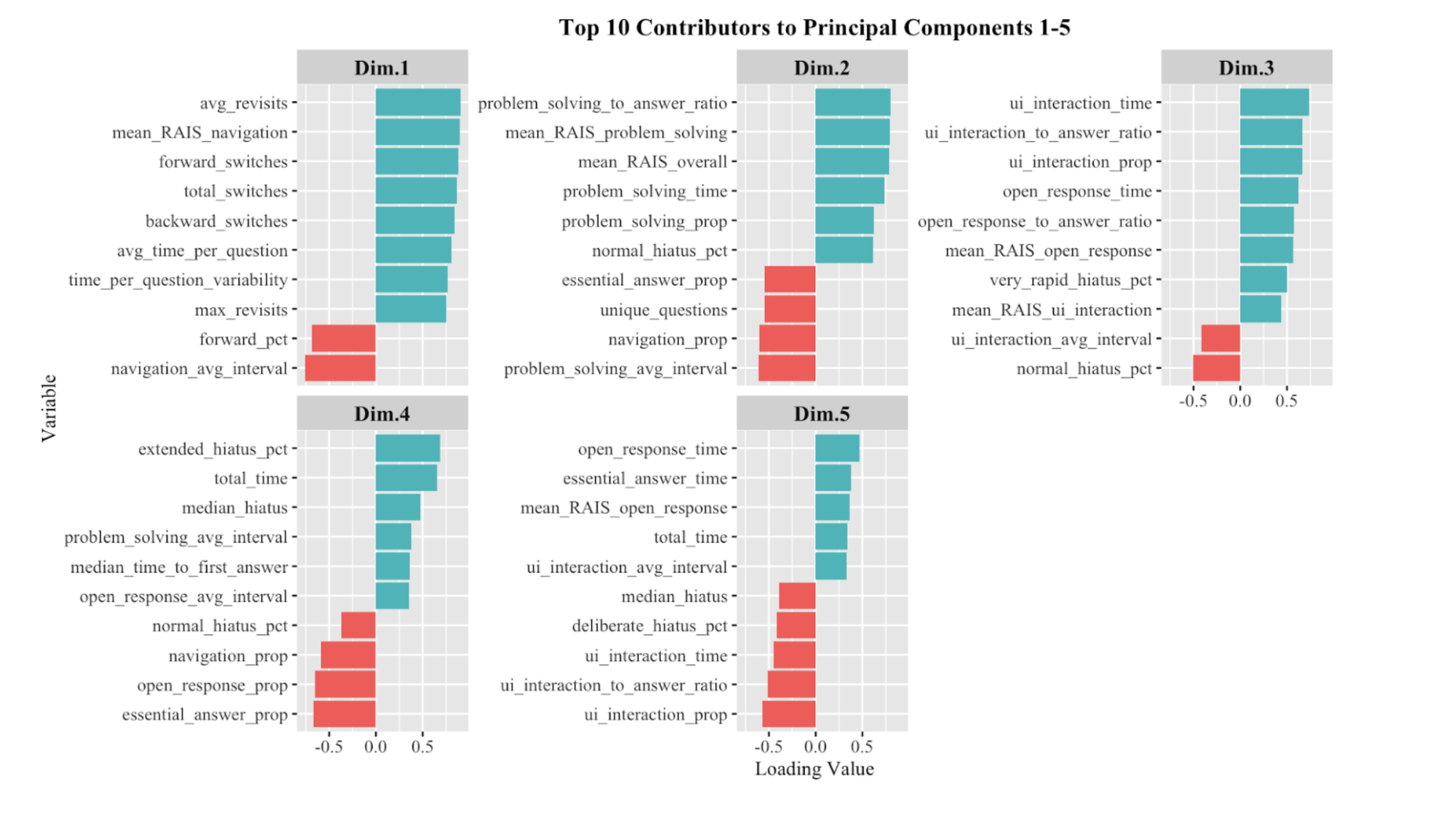

- Engineered 43 features across five categories: Interaction Patterns, Time Management, Answer Timing, Hiatus Time, and Navigation Patterns

- Applied Principal Component Analysis (PCA) to these features to identify underlying dimensions of test-taking behavior

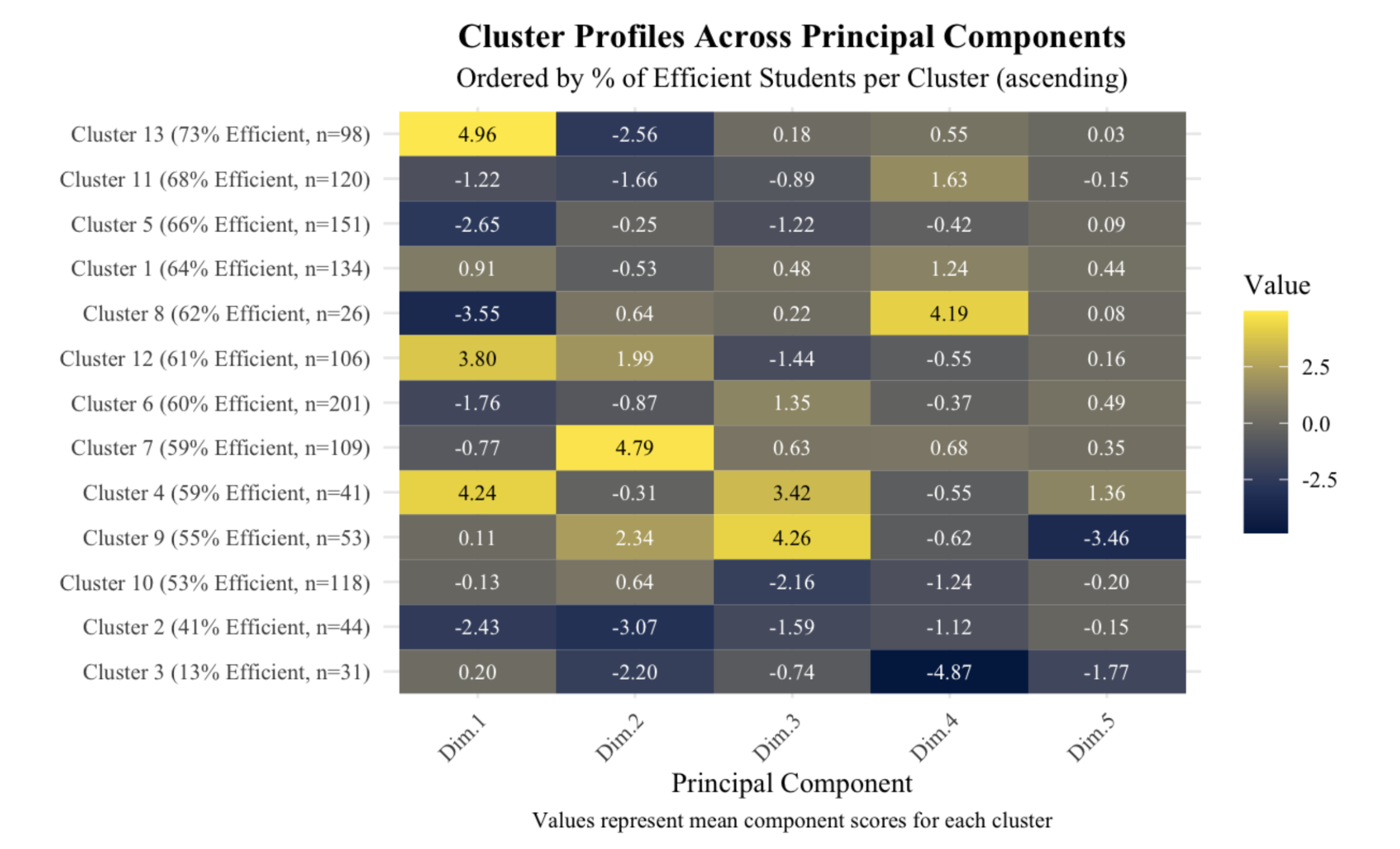

- Used Gaussian Mixture Modeling (GMM) to cluster students into groups with similar behavioral patterns

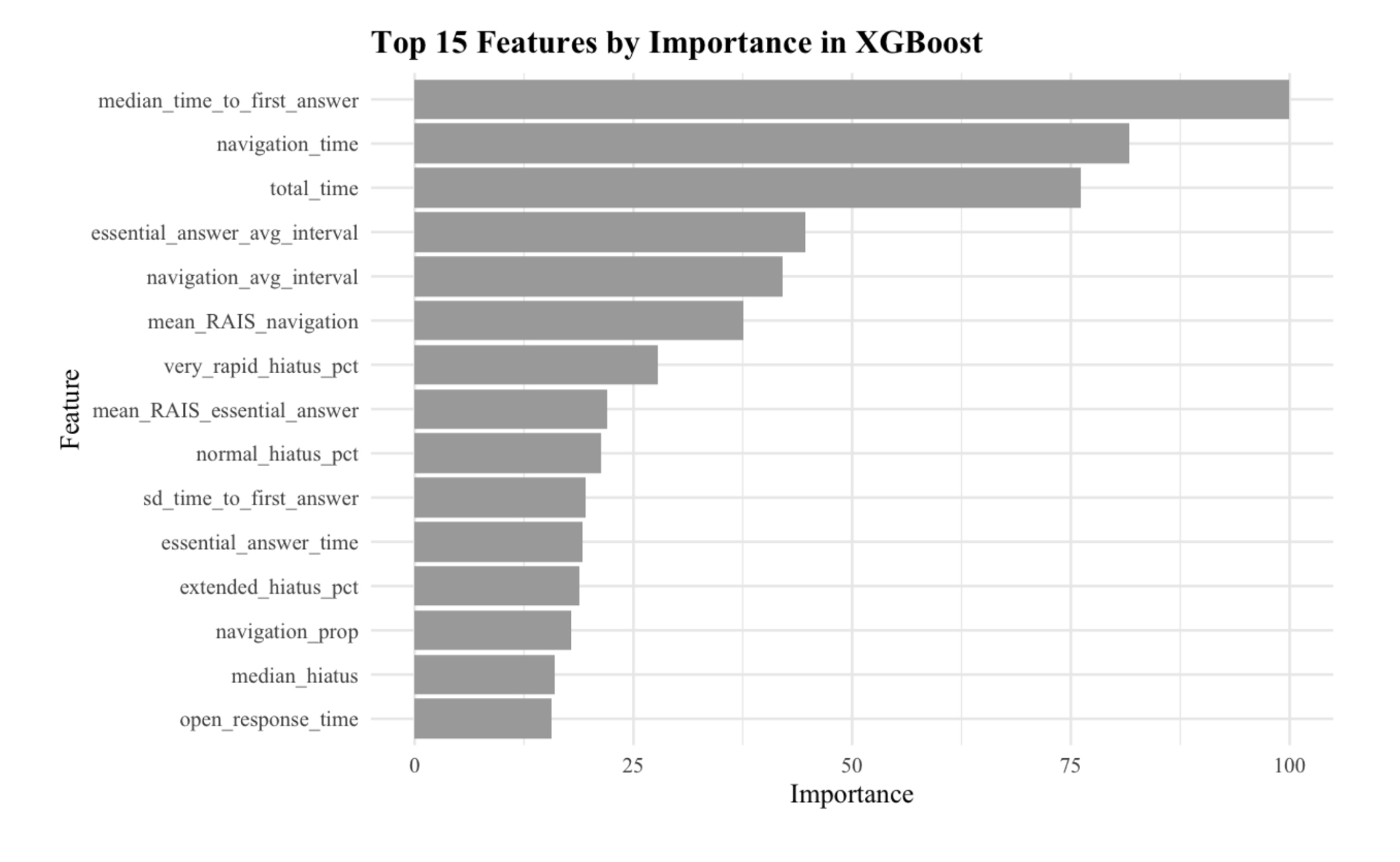

- Implemented supervised learning models (Decision Tree, Random Forest, XGBoost, and Logistic Regression) to predict test-taking efficiency
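The unsupervised part of this workflow (standardize the features, reduce them with PCA, then cluster with a GMM) might look roughly like the sketch below. It is a minimal illustration, not the study's actual code: the data are synthetic, the choice of five components simply mirrors the five dimensions reported in the findings, and selecting the number of mixture components by BIC is an assumption, since the criterion used in the study is not stated here.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.mixture import GaussianMixture
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the engineered feature matrix (NOT the NAEP data):
# 300 hypothetical students x 43 features, drawn from three shifted groups.
rng = np.random.default_rng(0)
centers = rng.normal(scale=3.0, size=(3, 43))
X = np.vstack([c + rng.normal(size=(100, 43)) for c in centers])

# Standardize so that no single feature dominates the principal components.
X_scaled = StandardScaler().fit_transform(X)

# Project onto five principal components (assumed count, see lead-in).
pca = PCA(n_components=5, random_state=0)
scores = pca.fit_transform(X_scaled)

# Fit GMMs with 1..6 components and keep the one with the lowest BIC.
# BIC-based selection is an assumption made for this sketch.
models = [
    GaussianMixture(n_components=k, random_state=0).fit(scores)
    for k in range(1, 7)
]
best = min(models, key=lambda m: m.bic(scores))

# Hard cluster assignment for each synthetic student.
labels = best.predict(scores)
print("components kept:", scores.shape[1])
print("clusters selected:", best.n_components)
```

On real process data, the range of candidate cluster counts would need to be wide enough to cover the number of groups the data can support.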

Key Findings

Our analysis identified five major behavioral dimensions:

- Navigation Intensity: How students move between test items

- Problem-Solving Tool Use: Engagement with calculators and scratch tools

- UI Interaction: Time spent adjusting interface elements

- Deliberate Pausing: Thoughtful waiting before responding

- Content Interaction vs. UI: Focus on test content rather than interface

We discovered 13 distinct student clusters with varying percentages of efficient students. Notably, the “Strategic Navigators” (73% efficient students) and “Deliberate Pausers” (68% efficient students) exhibited the highest efficiency, while “Impulsive Rushers” (13% efficient students) demonstrated the lowest.

This pattern suggests that multiple pathways to efficiency exist, whereas inefficiency concentrates in specific behavioral patterns characterized by minimal pausing, high UI interaction, and low content engagement.
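A per-cluster percentage of efficient students, like the figures quoted above, is a simple grouped proportion. The sketch below shows one way to compute it. The cluster labels "A" and "B", the helper name `efficient_share`, and the records are all hypothetical, chosen only to make the arithmetic easy to follow.

```python
from collections import defaultdict

# Hypothetical (cluster label, is_efficient) pairs; illustrative values only.
students = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", False), ("B", False), ("B", True), ("B", False),
]

def efficient_share(records):
    """Return the fraction of efficient students within each cluster."""
    totals = defaultdict(int)
    efficient = defaultdict(int)
    for cluster, is_efficient in records:
        totals[cluster] += 1
        efficient[cluster] += int(is_efficient)
    return {cluster: efficient[cluster] / totals[cluster] for cluster in totals}

shares = efficient_share(students)
for cluster, share in sorted(shares.items()):
    print(f"{cluster}: {share:.0%} efficient")
```

Here cluster A has 3 of 4 efficient students and cluster B has 1 of 4, so the shares are 0.75 and 0.25.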

The supervised learning models corroborated these findings, with median time to first answer and navigation behaviors emerging as the strongest predictors of efficiency. This reinforces the importance of deliberate engagement for effective test-taking performance.
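One common way to rank predictors with a tree ensemble is to read the model's impurity-based feature importances. The sketch below illustrates the idea with a Random Forest on synthetic data. The feature names are hypothetical placeholders, the label is constructed to depend only on the first feature, and nothing here reproduces the study's models or results.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

# Hypothetical feature names; the study's actual variable names are not given here.
feature_names = [
    "median_time_to_first_answer",
    "n_item_revisits",
    "calculator_opens",
    "ui_adjust_time",
]

# Synthetic data: the "efficient" label depends only on the first feature,
# so that feature should dominate the importance ranking by construction.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, len(feature_names)))
y = (X[:, 0] > 0).astype(int)

model = RandomForestClassifier(n_estimators=100, random_state=0).fit(X, y)

# Impurity-based importances sum to 1; sort from most to least important.
ranking = sorted(
    zip(feature_names, model.feature_importances_),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, importance in ranking:
    print(f"{name}: {importance:.3f}")
```

Impurity-based importances can favor high-cardinality features, so permutation importance on held-out data is often used as a cross-check.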

Implications

Our findings have significant implications for computer-based assessment design:

- Interface Design: Minimizing unnecessary UI complexity to reduce cognitive load and distraction

- Supporting Deliberate Engagement: Designing ways to facilitate structured pauses and thoughtful interaction

- Adaptive Testing Interfaces: Developing systems that recognize and respond to inefficient patterns

- Educational Equity: Acknowledging that digital literacy, not just content knowledge, influences performance

This research contributes to our understanding of how digital testing environments impact student performance and provides actionable insights for improving assessment design to better measure actual knowledge rather than technological proficiency.